For most data engineering teams, the workflow for managing pipeline reliability follows a frustrating pattern: a job fails, an alert triggers, and engineers must then manually trace the error across distributed clusters to fix a problem that has already impacted the business.

This “reactive” model is becoming a bottleneck for the next wave of technology: agentic AI. Unlike traditional analytics, which can tolerate slight delays or minor data inconsistencies, agentic AI systems require high-quality, real-time data to function. If a pipeline delivers stale or corrupted data, the AI doesn’t just show a wrong chart; it makes incorrect autonomous decisions.

To bridge this gap, Chicago-based startup Definity is shifting the paradigm by embedding intelligence directly into the execution layer of data pipelines.

The Architecture of Intervention: Inside vs. Outside

The fundamental difference between Definity and existing industry leaders lies in where the monitoring happens.

Traditional monitoring tools, such as Datadog, Unravel Data, or Acceldata, operate from the outside: they observe metrics and system tables after a job has completed. As Definity CEO Roy Daniel explains, this approach is inherently late: “By the time you know something happened, it already happened.” When an external tool flags an issue, the compute resources have already been wasted and the bad data has already flowed downstream.

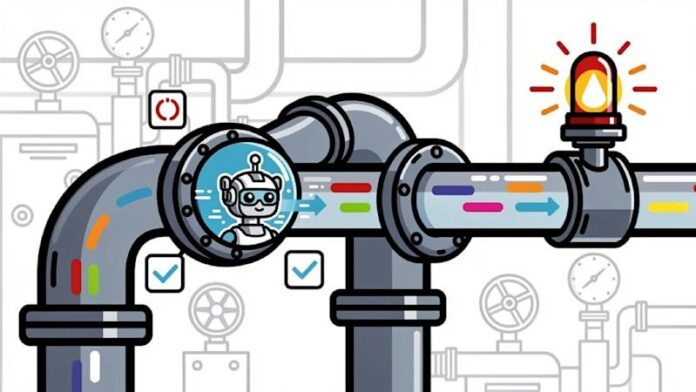

Definity takes a different architectural approach:

- Inline Instrumentation: Instead of watching from afar, Definity installs a JVM agent directly inside the Spark or dbt driver via a single line of code.

- Real-Time Context: Because the agent sits inside the execution layer, it captures live data on memory pressure, data skew, shuffle patterns, and infrastructure utilization as the job runs.

- Active Intervention: Unlike traditional tools that only “read” data, Definity’s agents can “act.” They can modify resource allocation mid-run, stop a job before it propagates errors, or preempt a pipeline if they detect that upstream data is stale.
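The three points above can be sketched in a few lines of Python. This is an illustrative sketch under stated assumptions, not Definity's implementation: the agent jar path, the `RunSnapshot` type, the `decide` function, and both thresholds are hypothetical names invented here. The only real mechanisms used are the JVM's `-javaagent:` flag and Spark's `spark.driver.extraJavaOptions` property, which is one general way to load an agent into a Spark driver.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median

# Hypothetical path: the article does not name the agent artifact or its install line.
AGENT_JAR = "/opt/agents/pipeline-agent.jar"


def driver_agent_conf(agent_jar: str = AGENT_JAR) -> dict[str, str]:
    """Return the Spark property that loads a JVM agent into the driver process."""
    return {"spark.driver.extraJavaOptions": f"-javaagent:{agent_jar}"}


@dataclass
class RunSnapshot:
    """Hypothetical view of what an in-process agent might see during a run."""
    upstream_updated_at: datetime   # last refresh time of the upstream input
    partition_bytes: list[int]      # size of each partition in the current stage


def decide(
    snapshot: RunSnapshot,
    now: datetime,
    max_staleness: timedelta = timedelta(hours=1),  # assumed freshness budget
    skew_limit: float = 5.0,                        # assumed max/median ratio
) -> str:
    """Return 'preempt', 'rebalance', or 'continue' for the running job."""
    # Stop before doing work on stale input, so bad data never flows downstream.
    if now - snapshot.upstream_updated_at > max_staleness:
        return "preempt"
    # Flag data skew: one partition far larger than the typical partition.
    sizes = snapshot.partition_bytes
    if sizes:
        typical = median(sizes)
        if typical > 0 and max(sizes) / typical > skew_limit:
            return "rebalance"
    return "continue"
```

The point of the sketch is the placement of the decision: `decide` runs while the job is still executing, so a stale input or a skewed stage can be acted on before compute is spent and before output is written. Note that in Spark client mode the driver JVM has already started by the time application code runs, so the property has to be supplied at launch (for example through `spark-submit --driver-java-options`) rather than set in the application's own configuration.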

Real-World Impact: Efficiency Over Elasticity

The value of this approach is most visible in environments where resources are finite. Companies running on-premises infrastructure cannot “scale up” instantly the way cloud users can, so every inefficient query translates directly into wasted hardware capacity and cost.

Nexxen, an ad tech platform, serves as a primary case study for this transition. Facing large-scale Spark workloads on-premises, Nexxen’s engineering team struggled not just with failures, but with the mounting cost of inefficiency.

After deploying Definity, the results were immediate:

- Optimization: The team identified 33% of all optimization opportunities within the first week.

- Efficiency: Troubleshooting and optimization efforts were reduced by 70%.

- Capacity: The platform unlocked enough infrastructure capacity to allow for workload growth without the need for new hardware investments.

The New Stakes of Data Engineering

The rise of Definity signals a broader shift in the industry: Data pipeline operations are becoming an AI infrastructure problem.

As data pipelines move from supporting simple dashboards to powering autonomous AI agents, the margin for error has vanished. The transition from “observing” a failure to “preventing” it through in-execution intelligence is no longer just a luxury for optimization—it is becoming a requirement for the reliability of the entire AI stack.

Conclusion

By embedding agents directly into the execution layer, Definity is moving data operations from a reactive “fix-it-later” model to a proactive, real-time system. This shift is critical as enterprises move toward agentic AI, where data integrity is the foundation of autonomous decision-making.