The boundary between human skill and machine precision is blurring. Following recent reports of robots competing in long-distance running, a new breakthrough from Sony suggests that even fast-paced, high-reflex sports such as professional table tennis are no longer out of reach for artificial intelligence.

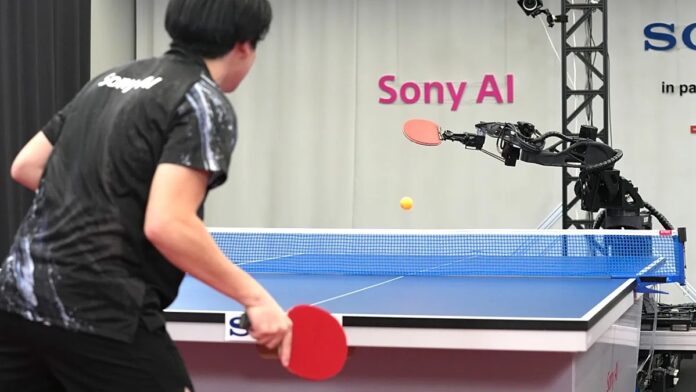

Sony has unveiled Ace, an AI-driven robot capable of defeating professional table tennis players. The achievement marks a significant milestone in robotics: a move beyond simple automation toward genuine competitive agility.

The Anatomy of a Digital Athlete

Unlike a human player, who relies on two arms and biological vision, Ace uses a specialized hardware configuration designed for extreme precision:

- Advanced Vision: The robot uses nine camera “eyes” to track the ball. This allows it not only to follow the ball’s trajectory but also to identify the ball’s logo and detect complex spin patterns in real time.

- Mechanical Precision: Ace operates with a single arm equipped with eight joints, providing a range of motion that facilitates rapid, precise strikes.

- Reinforcement Learning: Rather than following a rigid set of pre-programmed instructions, Ace was trained through reinforcement learning. This means the robot “learned” through experience and trial and error, much like a human athlete refining their technique through practice.

Leveling the Playing Field

A common critique of robotic competition is that machines often “cheat” by exploiting unfair advantages, such as superhuman reaction speeds that bypass the spirit of the game. Sony’s research team, however, set a different goal.

Michael Spranger, President of Sony AI, emphasized that the objective was not simply to build a machine that reacts faster than a human, but to build one that actually plays the game. By testing Ace on a standard Olympic-sized court under official rules, Sony sought to keep the competition fair.

The robot’s performance is measured against the standards of elite athletes who train at least 20 hours per week. The goal is to compete on the basis of tactics, decision-making, and skill, rather than mere mechanical speed.

Results and Evolutionary Progress

The development of Ace has been an iterative process. Since the initial study was published in the journal Nature, the team has continued to refine the AI. The results show a clear trajectory of improvement:

1. Increased Speed: Ace has become faster in its movements.

2. Endurance: The robot can now sustain longer rallies.

3. Aggression: The machine has learned to move closer to the table, adopting a more dominant playing style.

In recent testing, Ace faced four highly skilled players and won against all but one.

Why This Matters: The Future of Human-Robot Interaction

This breakthrough raises profound questions about the future of specialized labor and competitive sports. While Ace is currently a research tool, its ability to master “unstructured” environments, where a ball can bounce unpredictably, is a major leap forward for robotics.

Kinjiro Nakamura, a 1992 Olympian, noted that Ace performed shots previously thought impossible for humans. However, he added a vital perspective: by demonstrating these “impossible” moves, the robot may actually provide a blueprint for how humans can push their own physical limits.

The success of Ace suggests that AI is moving from the realm of data processing into the realm of physical mastery, and that machines can handle the complex, high-speed nuances of human sports.

Conclusion

Sony’s Ace represents a shift from robots that simply follow commands to robots that learn through experience. By mastering the complex physics of table tennis, Ace shows that AI can achieve professional-level dexterity and tactical intelligence.