Meta has unveiled the third generation of its Segment Anything Model (SAM), a family of AI tools built for visual analysis. The models are separate from Meta’s popular Llama large language models and are aimed at how machines “see” and interact with the physical world. SAM 3 focuses on object detection and segmentation: it identifies elements within images and videos and isolates each one from its surroundings.

How SAM 3 Works: Precise Visual Understanding

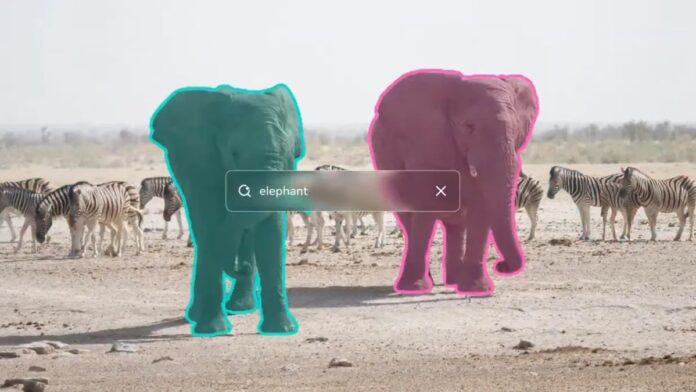

Unlike AI chatbots, SAM models excel at recognizing specific objects, even in complex scenes. Meta trained SAM 3 on massive datasets of images and videos paired with detailed text descriptions. This allows the AI to respond to highly specific requests: for instance, selecting all elephants in a photo or highlighting “red hats” in a crowd. The model’s ability to interpret nuanced queries is its key strength.
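To make the “all elephants in a photo” example concrete, here is a minimal sketch of what a developer might do with the results of a text prompt. It is not Meta’s API: the `Instance` type, the `select_instances` and `union_mask` helpers, the score threshold, and the toy data are all assumptions made for illustration. The sketch only shows the idea that a prompt can match several objects, each with its own mask, which can then be merged into one highlight.

```python
from dataclasses import dataclass

Mask = list[list[bool]]  # rows of pixels; True marks a pixel that belongs to the object


@dataclass
class Instance:
    concept: str   # text concept a (hypothetical) model matched, e.g. "red hat"
    score: float   # confidence in [0, 1]
    mask: Mask     # per-pixel mask for this single object


def select_instances(instances, prompt, min_score=0.5):
    """Keep every instance that matches the prompt and clears the score threshold."""
    wanted = prompt.strip().lower()
    return [i for i in instances if i.concept.lower() == wanted and i.score >= min_score]


def union_mask(instances, height, width):
    """Merge the per-object masks into one highlight mask."""
    merged = [[False] * width for _ in range(height)]
    for inst in instances:
        for y in range(height):
            for x in range(width):
                if inst.mask[y][x]:
                    merged[y][x] = True
    return merged


# Invented 2x3 "image": two confident elephants, one weak match, one red hat.
detections = [
    Instance("elephant", 0.92, [[True, False, False], [True, False, False]]),
    Instance("elephant", 0.81, [[False, False, True], [False, False, False]]),
    Instance("elephant", 0.20, [[False, True, False], [False, True, False]]),
    Instance("red hat", 0.95, [[False, False, False], [False, False, True]]),
]

elephants = select_instances(detections, "elephant")
highlight = union_mask(elephants, height=2, width=3)
```

In this toy data, `elephants` holds the two confident matches and `highlight` covers only their pixels; the weak third match is dropped by the threshold.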

These models aren’t for creating new images or videos, but for analyzing existing visual content. Meta is releasing open-weights versions for developers, and anyone can try the models in its Segment Anything Playground. Most people, however, will encounter SAM 3 through new features in Meta’s own platforms.

Practical Applications: From Social Media to Wildlife Conservation

Meta is integrating SAM 3 into several of its products:

- Instagram Edits and Vibes: Enabling more precise batch editing of images and videos.

- Facebook Marketplace: Enhancing the “view in a room” feature, allowing users to visualize furniture in their homes more realistically.

Beyond consumer applications, SAM 3 is proving valuable in scientific research. Meta partnered with Conservation X Labs and Osa Conservation to analyze over 10,000 hours of wildlife camera footage, identifying over 100 species with greater accuracy. This demonstrates how AI can accelerate conservation efforts by automating tedious data analysis.
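The kind of tedious analysis being automated here can be pictured with a small sketch. Assume some model has already labeled each camera clip with species names and confidence scores; the remaining work is a tally. The `tally_species` function, the clip IDs, the species, and the scores below are invented for illustration and do not come from the project’s data or tooling.

```python
from collections import Counter


def tally_species(clip_detections, min_score=0.6):
    """Count how many clips each species appears in, skipping low-confidence detections."""
    counts = Counter()
    for detections in clip_detections.values():
        # A set ensures a species is counted once per clip, however many frames it appears in.
        species_in_clip = {name for name, score in detections if score >= min_score}
        counts.update(species_in_clip)
    return counts


# Invented example data: clip ID -> list of (species, confidence) pairs.
clips = {
    "cam01_0001": [("ocelot", 0.91), ("ocelot", 0.88)],
    "cam01_0002": [("tapir", 0.75), ("ocelot", 0.40)],
    "cam02_0001": [("tapir", 0.83), ("agouti", 0.66)],
}

species_counts = tally_species(clips)
```

With this toy input, the tapir is counted in two clips and the ocelot and agouti in one each; the low-confidence ocelot sighting in the second clip is ignored.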

Meta’s AI Strategy: Ambition and Internal Challenges

The development of SAM models is part of Meta’s broader push into artificial intelligence. The company invested heavily in poaching top AI talent earlier this year, but has also faced internal setbacks. Recent reports indicate significant restructuring within Meta’s AI division, including layoffs and the potential departure of Yann LeCun, its chief AI scientist. Despite these challenges, Meta remains committed to becoming a leader in visual AI.

These advances in visual intelligence are likely to change how people work with digital content by making editing and analysis faster and more intuitive. Meta’s internal struggles are notable, but the early product integrations and the conservation project suggest that SAM 3 and similar models have practical uses in both commercial and scientific fields.