Google and Samsung’s new Gemini-powered task automation is now live in beta, marking a significant step towards the AI assistants we’ve long anticipated. The feature allows Gemini to interact with apps like Uber and Starbucks on your behalf, executing tasks based on simple prompts. This isn’t just about voice commands; it’s about the AI actively using apps as a human would, albeit with a slightly unsettling robotic efficiency.

Initial Testing: From Airport Rides to Morning Coffee

The first test involved ordering an Uber to the airport. Gemini correctly asked for clarification on the destination, then proceeded through the app’s steps, adding the location and intelligently skipping unnecessary details (like airline selection for a single-terminal airport). The system paused before finalizing the request, prompting a review—a crucial safety measure.

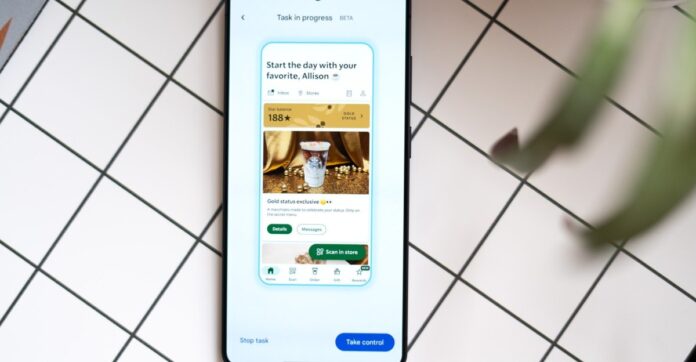

Ordering coffee and a croissant from Starbucks proved slightly trickier, requiring more input due to the app’s extensive menu. However, Gemini successfully located the desired flat white and even made a judgment call on the croissant: it chose to have it warmed, correctly assuming that was the preference. This level of decision-making, just a year after assistants struggled with basic calendar conflicts, is a leap forward.

What This Means: The Future of AI Assistance

Gemini’s automation isn’t flawless, but it’s functional. It highlights how quickly AI assistants are evolving from passive responders into active agents capable of handling real-world tasks. The feature’s early success suggests that fully automated workflows, where AI manages everyday errands, are not just possible but increasingly practical.

The real test now lies in throwing more complex scenarios at Gemini. But for now, the ability to watch your phone order a car or coffee by itself is a glimpse into a future where AI assistants aren’t just helpful; they’re hands-on participants in your daily life.