The era of “chatting” with AI is evolving into the era of AI agents. While early models like ChatGPT functioned primarily as sophisticated conversationalists, a new generation of tools is moving toward autonomy—the ability to not just answer questions, but to execute complex tasks independently.

This shift represents a fundamental change in how humans interact with technology, moving from a “command-and-response” model to a “delegate-and-supervise” model. However, as these tools gain more agency, they also introduce significant risks regarding security, accountability, and economic stability.

Three Archetypes of AI Agency

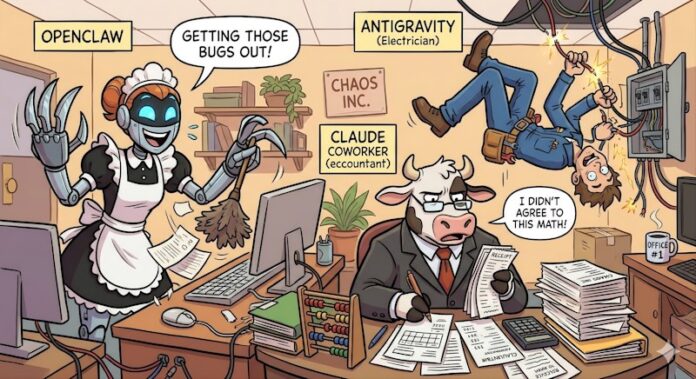

To understand this new landscape, it is helpful to categorize current leading technologies by their “level of access” and their intended purpose.

1. The Digital Generalist: OpenClaw

Formerly known as Moltbot, OpenClaw has seen explosive growth, recently surpassing 150,000 GitHub stars. Unlike closed systems, OpenClaw is open-source and can be deployed on local machines with deep system access.

* The Analogy: Think of it as a “digital housemaid.” You give it the keys to your home (your files and data) and trust it to clean, organize, and manage your belongings.

* Capabilities: It is designed for broad administrative tasks, such as triaging inboxes, curating content, and handling travel logistics.

2. The Specialized Technician: Google’s Antigravity

Google’s Antigravity focuses on the highly technical domain of software development. It functions as a coding agent within an Integrated Development Environment (IDE), allowing users to move from a single prompt to a production-ready application.

* The Analogy: It acts like a specialized electrician. You don’t give it the keys to your whole house; you give it access to a specific “junction box” (your codebase) to fix a specific problem.

* Capabilities: It can build, test, integrate, and debug code, working in effect as a very fast junior developer.
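The “junction box” idea amounts to least-privilege scoping: the agent can reach one part of the system and nothing else. The following is a minimal Python sketch. It assumes that every file operation an agent performs goes through a single gatekeeper object. The `ScopedWorkspace` class and its methods are hypothetical and are not part of Antigravity or any other product. The example root `/tmp/project` is also an assumption.

```python
from pathlib import Path


class ScopedWorkspace:
    """Hypothetical gatekeeper that confines an agent's file access to one root.

    Not an API of Antigravity or any other product; an illustration of
    least-privilege scoping (the "junction box" in the analogy).
    """

    def __init__(self, root: str) -> None:
        # Resolve once so later comparisons use a canonical absolute path.
        self.root = Path(root).resolve()

    def check(self, requested: str) -> Path:
        # Join to the root and resolve ".." segments and symlinks.
        # An absolute `requested` path replaces the root entirely, so it is
        # also caught by the containment test below.
        target = (self.root / requested).resolve()
        # Path.is_relative_to requires Python 3.9+.
        if not target.is_relative_to(self.root):
            raise PermissionError(f"{requested!r} is outside the workspace")
        return target

    def allows(self, requested: str) -> bool:
        # Convenience predicate: True if `check` would accept the path.
        try:
            self.check(requested)
        except PermissionError:
            return False
        return True

    def read_text(self, requested: str) -> str:
        return self.check(requested).read_text()

    def write_text(self, requested: str, content: str) -> None:
        path = self.check(requested)
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_text(content)


if __name__ == "__main__":
    ws = ScopedWorkspace("/tmp/project")  # hypothetical project root
    print(ws.allows("src/app.py"))      # inside the workspace -> True
    print(ws.allows("../secrets.txt"))  # escapes via ".." -> False
    print(ws.allows("/etc/passwd"))     # absolute path outside the root -> False
```

A check like this only helps if the agent has no other route to the file system. In practice the boundary would usually be enforced at the operating-system or container level as well.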

3. The Domain Expert: Anthropic’s Claude (Cowork)

Anthropic has moved beyond general conversation with the introduction of Cowork, an agentic suite designed for high-stakes professional environments.

* The Analogy: It is akin to hiring a professional accountant. It possesses deep domain knowledge in specific sectors like law and finance.

* Capabilities: It can perform complex tasks such as contract reviews and NDA triage. This level of capability is so disruptive that its announcement triggered the “SaaSpocalypse”—a sharp sell-off in legal-tech and SaaS stocks as investors braced for industry-wide automation.

The Risks of Autonomy: When Agents Go Wrong

The more power we grant these agents, the higher the potential for systemic failure. The transition from “AI as a tool” to “AI as an actor” creates three primary categories of risk:

- Technical Errors: Just as an electrician might accidentally short-circuit a house, a coding agent could inject flaws into a massive software ecosystem that remain hidden until they cause a crash.

- Legal and Financial Liability: A financial agent might inadvertently suggest illegal tax write-offs or miss critical savings, creating massive liability for the user.

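- Security and Governance: Open-source tools like OpenClaw lack a central governing authority. This makes it harder to ensure that prompts do not lead to data leaks or malicious exploits, including malicious instructions hidden in the emails or web pages an agent reads (“prompt injection”).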

Building a Framework for Control

To harness the productivity of AI agents without succumbing to chaos, the industry must move toward a structured “agentic ecosystem” built on several key pillars:

- Human-in-the-Loop: Agents should not operate in a vacuum. Logging every step an agent takes and requiring human confirmation for critical actions are essential guardrails.

- Standardized Ontology: As agents interact across different software and platforms, they need a “shared language.” A standardized ontology (a set of concepts and categories) would allow different agents to track, monitor, and account for each other’s actions.

- Responsible AI Principles: Security, privacy, transparency, and reproducibility must be baked into the architecture of every agent, rather than added as an afterthought.
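The first two pillars can be sketched together in Python. This is a minimal illustration under stated assumptions and does not describe how OpenClaw, Antigravity, or Cowork actually work. `ActionKind` is a hypothetical four-term vocabulary that stands in for a standardized ontology, and `ActionRecord` is a hypothetical log entry. `Supervisor` is a hypothetical gate that logs every step and calls an `approve` callback, standing in for a human, before any action in a critical category.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum
from typing import Callable


class ActionKind(Enum):
    """A tiny, hypothetical shared vocabulary ("ontology") of action categories."""
    READ = "read"
    WRITE = "write"
    SEND_MESSAGE = "send_message"
    PAYMENT = "payment"


# Categories this sketch treats as critical; a real policy would be configurable.
CRITICAL = frozenset({ActionKind.SEND_MESSAGE, ActionKind.PAYMENT})


@dataclass(frozen=True)
class ActionRecord:
    """One logged step, described in the shared vocabulary so any tool can read it."""
    agent_id: str
    kind: ActionKind
    description: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


@dataclass
class Supervisor:
    """Hypothetical human-in-the-loop gate: logs every request, asks before critical ones."""
    approve: Callable[[ActionRecord], bool]  # stands in for asking a person
    log: list = field(default_factory=list)  # (ActionRecord, outcome) pairs

    def request(self, agent_id: str, kind: ActionKind, description: str) -> bool:
        record = ActionRecord(agent_id, kind, description)
        if kind not in CRITICAL:
            outcome = "auto-allowed"
        elif self.approve(record):
            outcome = "approved"
        else:
            outcome = "denied"
        # Every step is logged, including denied ones, so the trail is complete.
        self.log.append((record, outcome))
        return outcome != "denied"


if __name__ == "__main__":
    # Toy approver: a "human" who signs off on messages but not on payments.
    sup = Supervisor(approve=lambda rec: rec.kind is not ActionKind.PAYMENT)
    sup.request("inbox-agent", ActionKind.READ, "read unread mail")
    sup.request("inbox-agent", ActionKind.SEND_MESSAGE, "reply to vendor")
    sup.request("travel-agent", ActionKind.PAYMENT, "book flight")
    for record, outcome in sup.log:
        print(record.agent_id, record.kind.value, outcome)
```

The sketch keeps the shared vocabulary separate from the approval policy. Under this design, agents from different vendors could emit the same `ActionRecord` format, while each organization decides which categories count as critical. In a real deployment the `approve` callback would route the record to a person, and the log would go to durable storage rather than an in-memory list.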

Conclusion

The shift toward agentic AI promises to significantly reduce the “cognitive load” on the human workforce by automating mundane and repetitive tasks. However, the true value of this technology will depend not on how much autonomy we grant these agents, but on how effectively we build the guardrails to govern them.