Databricks has unveiled KARL (Knowledge Agents via Reinforcement Learning), a new AI agent designed to overcome the limitations of traditional Retrieval-Augmented Generation (RAG) pipelines. Most enterprise RAG systems excel at one type of search and fail silently when confronted with others. A model optimized for report synthesis will struggle with precise entity retrieval, while a lookup-focused model will falter on multi-step reasoning. KARL aims to solve this by training a single agent to handle six distinct enterprise search behaviors.

The Problem with Current RAG Systems

Existing RAG systems are brittle. They are typically fine-tuned for specific search tasks, leaving them vulnerable when faced with real-world complexity. An agent trained for simple question answering may collapse when tasked with reconstructing fragmented internal records or synthesizing intelligence from unstructured meeting notes. This inflexibility forces teams to build separate pipelines for each use case, creating maintenance overhead and siloed knowledge access.

How KARL Works: Multi-Task Reinforcement Learning

Databricks trained KARL using a novel reinforcement learning (RL) algorithm, achieving performance comparable to Claude Opus 4.6 at a 33% lower cost per query and 47% lower latency. Crucially, the model was trained entirely on synthetic data that it generated itself, eliminating the need for expensive human labeling. The algorithm that made this possible is OAPL (Optimal Advantage-based Policy Optimization with Lagged Inference policy), which Databricks co-developed with researchers from Cornell and Harvard.

The key innovation of OAPL is its stability in distributed training environments. Unlike traditional LLM RL approaches, it tolerates policy lag, the gap between the model version that generates training samples and the version being updated. This allows sample-efficient training and reduces GPU costs, which makes the approach viable for realistic enterprise deployment.

Six Enterprise Search Behaviors Handled by KARL

To evaluate KARL, Databricks created KARLBench, a benchmark assessing performance across six critical enterprise search behaviors:

- Constraint-driven entity search: Retrieving specific entities under strict conditions.

- Cross-document report synthesis: Combining information from multiple sources into a coherent report.

- Long-document traversal with tabular reasoning: Extracting insights from large documents with numerical data.

- Exhaustive entity retrieval: Identifying all relevant entities within a given dataset.

- Procedural reasoning over technical documentation: Following step-by-step instructions from complex manuals.

- Fact aggregation over internal company notes: Combining fragmented data to answer complex questions.

KARL demonstrated strong generalization, performing well on tasks it was never explicitly trained on, unlike standard RAG systems.
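To make the "failing silently" problem concrete, here is a minimal sketch of per-behavior scoring. It is not KARLBench's actual harness, which the article does not describe; the behavior identifiers, the `(behavior, is_correct)` result format, and both function names are assumptions made for illustration. The point is only that an overall average can hide one weak behavior, so each of the six should be scored on its own.

```python
from collections import defaultdict

# The six behaviors listed above. These identifier strings are hypothetical
# labels chosen for this sketch, not names taken from KARLBench.
BEHAVIORS = [
    "constraint_driven_entity_search",
    "cross_document_report_synthesis",
    "long_document_tabular_reasoning",
    "exhaustive_entity_retrieval",
    "procedural_reasoning",
    "fact_aggregation",
]


def per_behavior_accuracy(results):
    """Map each behavior to its accuracy.

    `results` is an iterable of (behavior, is_correct) pairs. Behaviors with
    no examples are left out rather than reported as zero.
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for behavior, is_correct in results:
        totals[behavior] += 1
        correct[behavior] += int(is_correct)
    return {b: correct[b] / totals[b] for b in BEHAVIORS if totals[b]}


def weakest_behavior(scores):
    """Return the behavior with the lowest accuracy."""
    return min(scores, key=scores.get)
```

Feeding this a team's own graded query logs, rather than a single aggregate score, shows which search behavior a pipeline is quietly failing on.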

The Compression Layer: Context Management

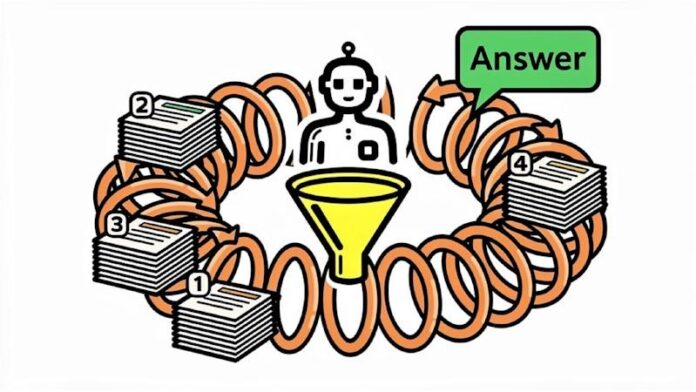

A major challenge in enterprise search is managing the context window of Large Language Models (LLMs). Traditional RAG systems either rely on massive vector databases or force LLMs to process too much information at once. KARL addresses this by learning to compress its own context end-to-end through RL. When the accumulated context exceeds the LLM’s limit, the agent compresses it, preserving accuracy while staying within the window. Without this compression, the model’s performance drops significantly.
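The control flow described above, compressing only once the context outgrows the window, can be sketched as follows. This is a rough illustration under loud assumptions: KARL's compression is learned through RL, whereas `truncate_compressor` here is a trivial placeholder that keeps the most recent entries, and `estimate_tokens`, `add_to_context`, and the half-window target are all hypothetical choices for this example.

```python
def estimate_tokens(text):
    # Crude stand-in for a tokenizer: counts whitespace-separated words.
    return len(text.split())


def truncate_compressor(history, budget):
    """Placeholder compressor: keep the newest entries that fit in `budget`.

    KARL learns its compression via RL; this function only marks where such
    a step would plug in.
    """
    kept, used = [], 0
    for entry in reversed(history):
        cost = estimate_tokens(entry)
        if used + cost > budget:
            break
        kept.append(entry)
        used += cost
    return list(reversed(kept))


def add_to_context(history, new_entry, max_tokens, compressor=truncate_compressor):
    """Append an entry and compress only if the window limit is exceeded."""
    history = history + [new_entry]
    total = sum(estimate_tokens(e) for e in history)
    if total > max_tokens:
        # Compress to half the limit (an arbitrary choice) so that the next
        # few search steps do not immediately trigger compression again.
        history = compressor(history, max_tokens // 2)
    return history
```

A real agent would pass a summarizing model as `compressor`; the surrounding loop stays the same.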

Limitations and Future Roadmap

KARL struggles with inherently ambiguous queries, where multiple valid answers exist. The model also sometimes gives up early on complex queries, which Databricks argues is often the correct behavior for cost efficiency. Currently, KARL supports only vector search; integration with SQL databases, file systems, and Python-based calculations is planned for future development.

Implications for Enterprise Data Teams

KARL highlights three crucial considerations for teams building their retrieval infrastructure:

- Pipeline architecture matters: Narrowly optimized RAG pipelines will fail on diverse query types.

- Reinforcement learning is key: According to Databricks, distillation from expert models cannot match the generalization capabilities of RL-trained agents.

- Efficiency is more than cost: Purpose-built search agents complete tasks faster, reduce wasted queries, and compress context effectively.

Building a model that knows how to search is more valuable than simply routing everything through general-purpose APIs.